The Agentic AI Era: Observability and Harness Engineering


In today's IT landscape, observability—the ability to accurately understand system state and proactively resolve issues—has become increasingly critical. With the rapid advancement of AI, we're witnessing a paradigm shift: from passive monitoring that simply collects predefined data and fires threshold-based alerts, to intelligent monitoring, where AI autonomously diagnoses anomalies and proposes solutions.

In this article, we'll walk through the evolutionary stages of AI models, examine how "Agentic AI"—systems that think and act on their own—relates to monitoring platforms, and explore the technical direction that observability platforms like WhaTap should pursue.

The Evolution of AI Models: Generation → Reasoning → Action

To set the stage, it helps to understand how AI models have evolved to where they are today. Early generative AI began with systems like ChatGPT that could understand human language and hold natural conversations. These models served as conversational partners, answering questions based on vast stores of knowledge.

AI then entered its second evolutionary stage: reasoning. Models moved beyond simple sentence generation and began tackling complex problems by working through them step by step before answering.

Today, AI has crossed a new threshold. Rather than passively answering questions, it now pursues goals on its own—we've entered the era of Agentic AI. Agentic AI refers to a next-generation class of systems that set their own goals, formulate plans, and act autonomously using external tools.

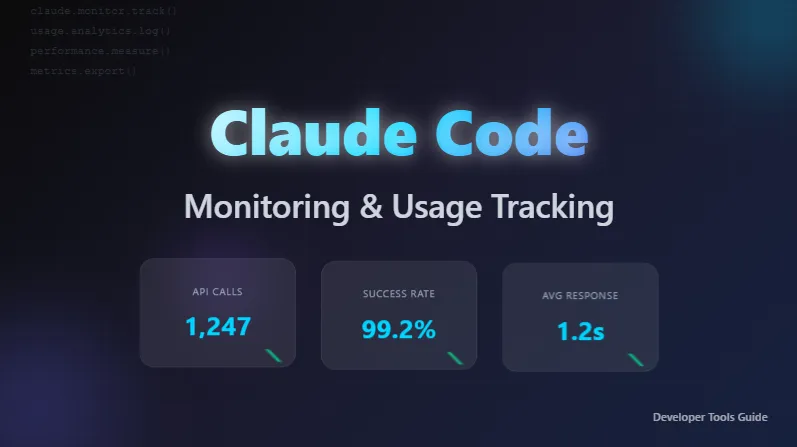

Agentic AI in Practice: Claude Code

Claude Code is a flagship example of Agentic AI, and it makes the practical gap with earlier models strikingly clear.

In the earlier chatbot era, if your code had a bug, the AI might suggest a fix or offer alternative code, but the work of copying that code, testing it in a real environment, and applying it fell squarely on the human engineer.

Today's agentic AI operates very differently. Given a problem, it reaches for the tools it needs: it reads and comprehends the entire codebase, plans and executes changes across multiple files, and runs tests to verify its work. When a test fails, it analyzes the error message, edits the code, and repeats the cycle until every test passes.
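The edit, test, and retry cycle described above can be sketched as a small loop. This is a minimal illustration, not how Claude Code is implemented: `run_fix_loop`, `fake_run_tests`, and `fake_propose_fix` are hypothetical names, and the two stand-in functions take the place of a real test runner and a real model call.

```python
from typing import Callable, Optional, Tuple


def run_fix_loop(
    code: str,
    run_tests: Callable[[str], Optional[str]],
    propose_fix: Callable[[str, str], str],
    max_iterations: int = 5,
) -> Tuple[str, bool]:
    """Re-test and edit until the tests pass or the iteration budget runs out."""
    for _ in range(max_iterations):
        error = run_tests(code)  # None means every test passed
        if error is None:
            return code, True
        # Feed the failure message back so the next edit can target it.
        code = propose_fix(code, error)
    return code, run_tests(code) is None


# Stand-ins for a real test runner and a real model call (both hypothetical).
def fake_run_tests(code: str) -> Optional[str]:
    return None if "return a + b" in code else "AssertionError: add(1, 2) != 3"


def fake_propose_fix(code: str, error: str) -> str:
    return code.replace("a - b", "a + b")


fixed_code, passed = run_fix_loop(
    "def add(a, b):\n    return a - b\n", fake_run_tests, fake_propose_fix
)
print(passed)
```

The iteration budget is a deliberate choice in this sketch: without it, an agent that cannot find a fix would loop indefinitely.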

It goes further still. When a build or test fails after a change is pushed, the agent doesn't just report back and wait for instructions. It monitors the CI pipeline, diagnoses the root cause, and even commits the fix itself, working independently until the goal is met.

Multi-Agent Architectures and Orchestration

The rise of Agentic AI has inevitably given birth to a new concept: the Orchestration Agent.

Much like a conductor leading an orchestra, an orchestration agent acts as a top-level manager, assessing the full situation and wielding the right tools for the job. Beneath this conductor sit specialized sub-agents—each focused on a particular domain such as coding, database querying, or security auditing—taking direction from above.

When a complex task arrives, it gets distributed to the relevant domain experts, maximizing the overall speed and efficiency of problem-solving. This multi-agent orchestration pattern is already being implemented through open-source frameworks like LangGraph, AutoGen, and CrewAI, with a thriving community of related projects on GitHub.
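As a rough illustration of the pattern, the sketch below routes subtasks to registered domain specialists. It is plain Python and deliberately does not use the LangGraph, AutoGen, or CrewAI APIs; the `Orchestrator` class, the domain names, and the lambda sub-agents are all assumptions made for the example.

```python
from typing import Callable, Dict, List, Tuple

SubAgent = Callable[[str], str]  # takes a task description, returns a result


class Orchestrator:
    """Top-level agent that hands each subtask to the matching specialist."""

    def __init__(self) -> None:
        self._sub_agents: Dict[str, SubAgent] = {}

    def register(self, domain: str, agent: SubAgent) -> None:
        self._sub_agents[domain] = agent

    def dispatch(self, subtasks: List[Tuple[str, str]]) -> List[Tuple[str, str]]:
        results = []
        for domain, task in subtasks:
            agent = self._sub_agents.get(domain)
            if agent is None:
                # Surface the gap instead of silently dropping the subtask.
                results.append((domain, "unhandled: no sub-agent for this domain"))
            else:
                results.append((domain, agent(task)))
        return results


orchestrator = Orchestrator()
orchestrator.register("coding", lambda task: f"[coding] patched: {task}")
orchestrator.register("database", lambda task: f"[database] queried: {task}")

results = orchestrator.dispatch([
    ("database", "slow queries in the last hour"),
    ("coding", "add an index migration"),
    ("security", "audit the new endpoint"),
])
for domain, result in results:
    print(domain, "->", result)
```

In a real system the orchestrator would also decide how to split the incoming task; here the split is supplied by the caller to keep the example short.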

That said, applying this kind of architecture in real-world enterprise environments still has limits. Agent output is not yet reliable enough for enterprise customers to stake real decisions on, and closing that gap calls for a different kind of engineering.

The Rise of Harness Engineering

To guarantee the integrity and trustworthiness of AI agent outputs in enterprise settings, a new concept emerged in early 2026: Harness Engineering.

To place harness engineering in context, we first need to understand the layers beneath it—prompt engineering and context engineering—along with their respective roles and limitations. Crucially, these three are not sequential replacements for one another. They address different scopes, and each higher layer builds on and includes the one below it.

Prompt Engineering vs. Context Engineering

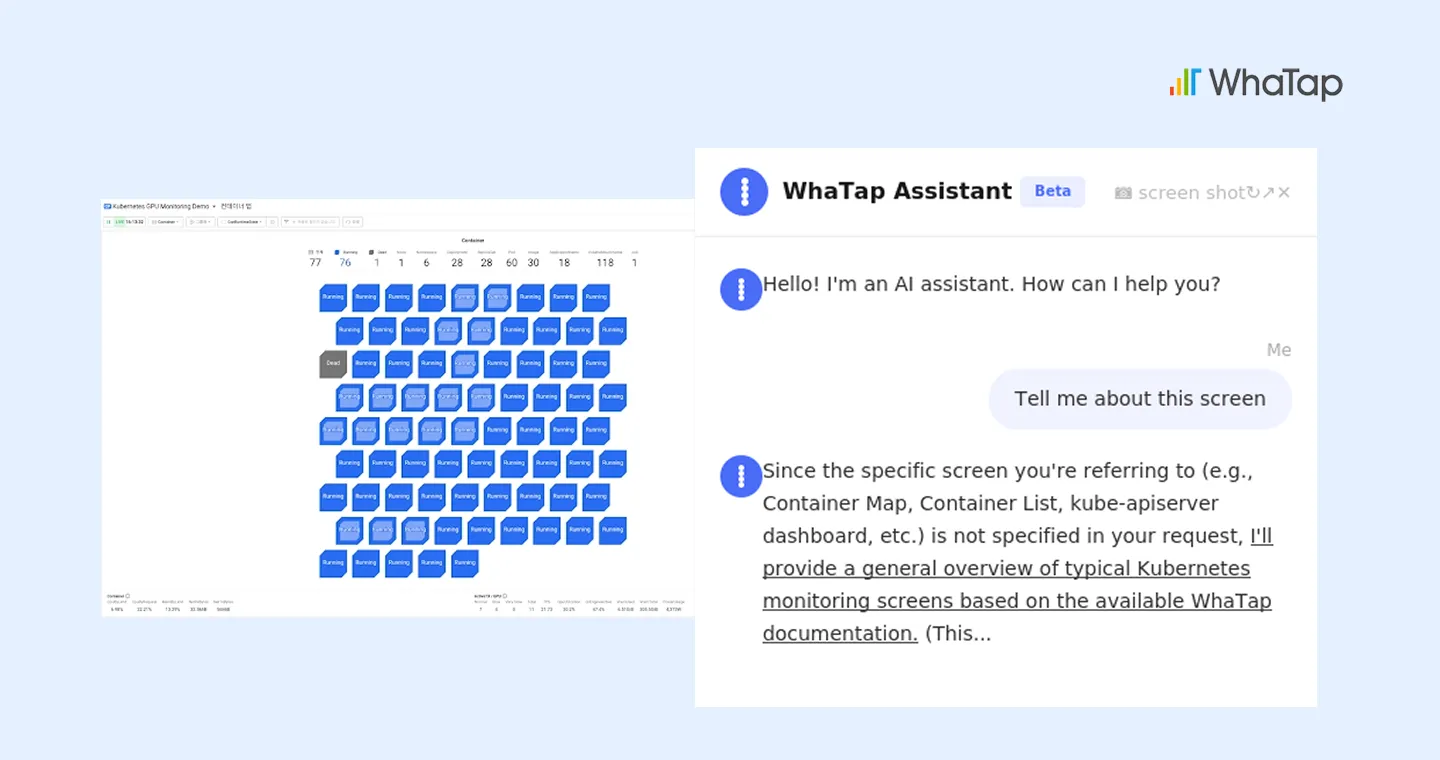

Prompt engineering is the approach currently used in features like WhaTap's built-in AI chatbot (Assistant).

Its strength lies in providing accurate, useful answers to user questions by drawing on large bodies of documentation, such as Kubernetes manuals. As a technique for optimizing AI response quality within a single conversational turn, it shines in document-based Q&A scenarios.
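The source does not describe how the Assistant is built, so the following is only a generic sketch of single-turn, document-grounded prompting. The keyword-overlap ranking, the function names, and the sample documentation strings are all assumptions; a production system would more likely use a proper search index.

```python
import re
from typing import List, Set


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and split it into alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def select_snippets(question: str, docs: List[str], top_k: int = 2) -> List[str]:
    """Rank documentation snippets by how many words they share with the question."""
    question_words = tokenize(question)
    ranked = sorted(docs, key=lambda d: len(question_words & tokenize(d)), reverse=True)
    return ranked[:top_k]


def build_prompt(question: str, docs: List[str]) -> str:
    excerpts = "\n".join(f"- {s}" for s in select_snippets(question, docs))
    return (
        "Answer using only the documentation excerpts below. "
        "If they do not cover the question, say so.\n\n"
        f"Documentation:\n{excerpts}\n\n"
        f"Question: {question}"
    )


docs = [
    "A Pod is the smallest deployable unit in Kubernetes.",
    "A Service exposes a set of Pods over the network.",
    "Helm is a package manager for Kubernetes.",
]
question = "What is a Pod in Kubernetes?"
prompt = build_prompt(question, docs)
print(prompt)
```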

Context engineering takes this one step further.

In a context-engineered environment, the AI responds while also being aware of the context around the user: which screen they're looking at (for example, WhaTap's Container Map), what functionality that screen includes, and how it ties into the user's actual data.

At this stage, users no longer need to copy monitoring data and manually describe it to the AI. Instead, they receive feedback precisely tailored to their current situation, significantly boosting productivity.
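One way to picture this is a prompt that is assembled from the user's current screen rather than typed by hand. The sketch below is an assumption about shape only: `ScreenContext`, its field names, the feature list, and the metric values are hypothetical and are not taken from WhaTap's product.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class ScreenContext:
    screen: str                # which screen the user has open
    features: List[str]        # what that screen can do
    metrics: Dict[str, float]  # data currently shown to the user


def build_context_prompt(question: str, ctx: ScreenContext) -> str:
    """Attach screen and data context so the user does not have to describe it."""
    metric_lines = "\n".join(
        f"- {name}: {value}" for name, value in sorted(ctx.metrics.items())
    )
    return (
        f"The user is viewing the '{ctx.screen}' screen.\n"
        f"Screen features: {', '.join(ctx.features)}\n"
        f"Metrics currently on screen:\n{metric_lines}\n\n"
        f"Question: {question}"
    )


ctx = ScreenContext(
    screen="Container Map",
    features=["group by namespace", "color by CPU usage"],
    metrics={"gpu_utilization": 71.0, "cpu_usage": 42.5},
)
context_prompt = build_context_prompt("Why is this container highlighted?", ctx)
print(context_prompt)
```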

The Limits and Risks of Context Engineering

Even context engineering, however, starts to show its limits in complex enterprise monitoring environments.

As the volume of context fed into the AI grows, it runs up against the model's finite context window. Earlier material gets truncated or compressed into summaries, details are lost, and the model begins to violate rules that were established up front.

A telling example: even when GPU metrics were being properly collected and displayed on WhaTap's Container Map, the AI lost track of the context and hallucinated that "the metric data appears not to be collected." In real-world monitoring and infrastructure operations, incorrect AI output translates directly into delayed incident response and poor decisions. What's needed is something stronger than mere context awareness—a robust control mechanism.
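A failure like the GPU example can be caught by checking the model's claim against the data itself before the answer is shown. The sketch below assumes the agent emits a structured claim instead of free text; `CollectionClaim`, `check_claim`, and the in-memory `store` are hypothetical and stand in for a real metric backend.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class CollectionClaim:
    metric: str
    collected: bool  # what the model asserts about this metric


def check_claim(claim: CollectionClaim, store: Dict[str, List[float]]) -> Tuple[bool, str]:
    """Compare the model's claim with what the metric store actually holds."""
    actually_collected = len(store.get(claim.metric, [])) > 0
    if claim.collected == actually_collected:
        return True, "claim matches the collected data"
    state = "has" if actually_collected else "has no"
    return False, f"claim rejected: '{claim.metric}' {state} samples in the store"


# The model says the GPU metric is missing, but samples exist.
store = {"gpu_utilization": [71.0, 68.5, 70.2]}
ok, reason = check_claim(CollectionClaim("gpu_utilization", collected=False), store)
print(ok, reason)
```

The check never asks the model to judge itself; it relies on ground truth the platform already has, which is what makes it robust to lost context.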

Harness Engineering: The Heart of AI Control Systems

This is precisely where harness engineering proves its worth.

The word "harness" originally refers to the tack used to control a horse—reins, a saddle, and so on—carrying the metaphor of a device that channels a powerful but unpredictable force in the right direction. In AI, harness engineering is the discipline of designing the entire control system that wraps around the powerful engine of an AI model.

Concretely, harness engineering encompasses four elements:

- Architectural boundaries that define the tools and permissions an AI agent can access.

- Guides (feedforward control) that steer the agent's behavior toward correct outcomes up front.

- Sensors (feedback loops) that verify the results of an agent's actions and prompt it to self-correct on failure.

- An observability layer that lets humans watch the agent's behavior in real time.
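Two of these elements, architectural boundaries and the observability layer, can be illustrated with a small gate that every tool call must pass through. This is a sketch under assumptions: `ToolGate`, the tool names, and the agent name are hypothetical, and a real harness would persist the audit trail rather than keep it in memory.

```python
import time
from typing import Any, Callable, Dict, List, Set


class ToolGate:
    """Allow only permitted tools and record every attempt for human review."""

    def __init__(self, tools: Dict[str, Callable[..., Any]], allowed: Set[str]) -> None:
        self._tools = tools
        self._allowed = allowed
        self.audit_log: List[Dict[str, Any]] = []

    def call(self, agent: str, tool: str, **kwargs: Any) -> Any:
        permitted = tool in self._allowed and tool in self._tools
        # Log before acting, so denied attempts are visible too.
        self.audit_log.append({
            "ts": time.time(),
            "agent": agent,
            "tool": tool,
            "args": kwargs,
            "permitted": permitted,
        })
        if not permitted:
            raise PermissionError(f"{agent} may not call {tool}")
        return self._tools[tool](**kwargs)


tools = {
    "read_metric": lambda name: {"gpu_utilization": 71.0}.get(name),
    "restart_pod": lambda pod: f"restarted {pod}",
}
gate = ToolGate(tools, allowed={"read_metric"})  # read-only boundary

value = gate.call("observability-agent", "read_metric", name="gpu_utilization")
try:
    gate.call("observability-agent", "restart_pod", pod="api-0")
    blocked = False
except PermissionError:
    blocked = True
print(value, blocked, len(gate.audit_log))
```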

Together, these put the AI through an internal feedback loop before its final output ever reaches the user, allowing it to cross-check its own reasoning. When internal review catches a logical error, the AI is prompted to identify the cause and produce an improved answer—substantially boosting accuracy.
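The internal review loop can be sketched as follows: a guide is passed in up front, sensors inspect each draft, and any problems are fed back for another attempt. The function and type names are hypothetical, and `fake_generate` stands in for a real model call.

```python
from typing import Callable, List, Optional, Tuple

Sensor = Callable[[str], Optional[str]]  # returns a problem description, or None


def answer_with_review(
    question: str,
    guide: str,
    generate: Callable[[str, str, List[str]], str],
    sensors: List[Sensor],
    max_attempts: int = 3,
) -> Tuple[Optional[str], int]:
    """Return the first draft that passes every sensor, or None if none does."""
    feedback: List[str] = []
    for attempt in range(1, max_attempts + 1):
        draft = generate(question, guide, feedback)
        problems = [p for p in (sensor(draft) for sensor in sensors) if p]
        if not problems:
            return draft, attempt
        feedback = problems  # tell the model what to fix on the next attempt
    return None, max_attempts  # withhold rather than ship an unverified answer


# Stand-in for a model call: it only improves once it receives feedback.
def fake_generate(question: str, guide: str, feedback: List[str]) -> str:
    if not feedback:
        return "Everything looks fine."
    return "gpu_utilization is at 71%, within the normal range."


def must_cite_metric(draft: str) -> Optional[str]:
    if "gpu_utilization" in draft:
        return None
    return "answer must cite the gpu_utilization metric"


answer, attempts = answer_with_review(
    "Is the GPU healthy?", "Cite the metrics you rely on.", fake_generate, [must_cite_metric]
)
print(attempts, answer)
```

Returning `None` after the last failed attempt is a design choice in this sketch: in an operations setting, no answer is usually safer than a confident wrong one.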

A concrete case in point: OpenAI's Codex team applied harness engineering principles to build a production application exceeding one million lines of code using only AI agents—without a human writing the code directly. It's a powerful demonstration that the design of the harness surrounding a model often matters more than the raw capability of the model itself.

WhaTap's Strategic Direction

So where does WhaTap go from here, amid this rapid evolution of agentic AI?

WhaTap has already laid a solid foundation with a prompt-engineering-based documentation-search chatbot, taking a confident first step into AI-driven monitoring.

Building on that foundation, the next stages of intelligent monitoring involve expanding into context engineering—feeding the AI the real-time context of the user's screen and data—and ultimately constructing a full control system rooted in harness engineering.

The long-term vision is for WhaTap to establish a distinct position within a future agentic ecosystem as an observability-specialized sub-agent—one that takes direction from a top-level orchestration conductor and owns the monitoring domain.

Imagine WhaTap's AI agents embedded organically beneath the user's higher-level systems, watching infrastructure around the clock and diagnosing anomalies autonomously. To make this real, WhaTap is systematically expanding its own harness-engineering-based validation layers: tool-access controls, behavior guides, result-verification sensors, and an observability layer for human operators. This is how WhaTap will continue to lead the industry in delivering monitoring results people can trust.

Conclusion: A Paradigm Shift in Observability

The union of agentic AI and observability platforms is more than a convenience upgrade—it's a shift that will reshape the very nature of IT infrastructure operations.

Once WhaTap's AI sub-agent system reaches full maturity, backed by the reliability and automation that harness engineering affords, it will serve as core infrastructure that automates much of the repetitive work engineers perform today and supports them in the rest. The future of an intelligent IT ecosystem, driven jointly by agentic AI and observability, is one well worth watching.
